Security researchers recently discovered an exposed server hosting infrastructure used in an AI-assisted intrusion workflow that leveraged the Model Context Protocol (MCP) to connect large language models directly to attack environments. The server contained more than a thousand files including firewall configurations, credential dumps, vulnerability scanning templates, and operational attack plans targeting organizations across multiple countries.

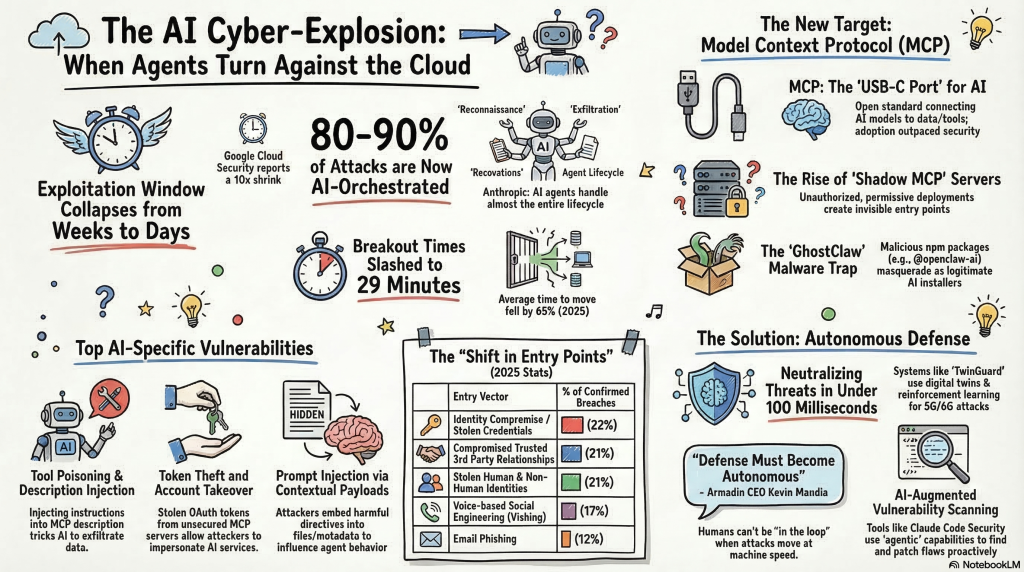

The findings, documented in independent threat research published in early 2026, show how attackers are beginning to integrate artificial intelligence directly into intrusion pipelines. By linking compromised systems to language models through MCP servers, adversaries can analyze reconnaissance data, generate vulnerability assessments, and automate attack planning far faster than traditional manual operations.

This article examines the emerging security risks associated with MCP deployments, how threat actors are experimenting with AI-assisted offensive frameworks, and the defensive measures organizations should adopt to secure AI-integrated infrastructure.

What the Model Context Protocol Actually Does

The Model Context Protocol (MCP), introduced by Anthropic, is a standardized interface that allows large language models to interact with external systems such as APIs, filesystems, development tools, and enterprise databases. Instead of operating purely as text generators, AI models connected through MCP can trigger operational workflows.

In practical deployments, MCP acts as a control layer between an AI agent and privileged infrastructure. The model generates a structured request, the MCP server translates it into a tool execution, and the result is returned to the model for further reasoning.

How MCP Changes the AI Threat Model

Traditional AI systems operate in isolated inference environments. MCP-connected agents operate inside live infrastructure environments where commands can modify systems, retrieve data, or execute software tools.

- AI model → generates structured command

- MCP server → maps request to tool execution

- Tool execution → interacts with system resources

- Response → returned to AI model for decision-making

This architecture effectively turns an AI model into an automation orchestrator.
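The four-step loop above can be sketched in a few lines of Python. This is a simplified illustration, not the official SDK: MCP messages are JSON-RPC 2.0 and tool invocations use a `tools/call` method, but the `TOOLS` registry, the `handle_request` function, and the two sample tools are hypothetical names chosen for this example.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical tool registry: tool name -> Python callable.
TOOLS: Dict[str, Callable[..., Any]] = {
    "add": lambda a, b: a + b,
    "echo": lambda text: text,
}


def _error(req_id: Any, code: int, message: str) -> str:
    """Build a JSON-RPC 2.0 error response."""
    return json.dumps(
        {"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}}
    )


def handle_request(raw: str) -> str:
    """Map one 'tools/call' request to a registered tool and return the response."""
    req = json.loads(raw)
    req_id = req.get("id")
    if req.get("method") != "tools/call":
        return _error(req_id, -32601, "Method not found")

    params = req.get("params", {})
    tool = TOOLS.get(params.get("name"))
    if tool is None:
        return _error(req_id, -32602, "Unknown tool")

    # Tool execution: this is the step that touches real system resources.
    result = tool(**params.get("arguments", {}))

    # The response goes back to the model for its next decision.
    return json.dumps(
        {
            "jsonrpc": "2.0",
            "id": req_id,
            "result": {"content": [{"type": "text", "text": str(result)}]},
        }
    )
```

Whatever sits in the registry defines what the model can do. Swapping the `add` lambda for a function that calls a cloud API or a shell is a one-line change, which is why the registry deserves the same scrutiny as any other privileged code path.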

Related Technologies Expanding MCP Risk

MCP does not operate in isolation. Its security implications become clearer when viewed alongside adjacent technologies that enable agentic automation.

- AI agent frameworks such as LangChain and AutoGen

- developer copilots integrated with IDE tooling

- CI/CD automation pipelines executing AI-generated scripts

- cloud API integrations exposing infrastructure controls

When MCP servers connect AI agents to these environments, the protocol becomes a privileged automation gateway. In several real-world deployments I have analyzed, MCP servers were granted access to infrastructure management APIs, effectively allowing AI agents to manipulate production resources.

How Attackers Are Weaponizing MCP in Real-World Intrusions

Evidence of AI-assisted intrusion workflows emerged after independent security research uncovered an exposed server hosting infrastructure used during active compromise operations. According to the published investigation, the system exposed more than 1,000 files including stolen firewall configuration data, credential dumps, reconnaissance output, and structured attack planning documents.

The exposed server was running a Python-based HTTP service and hosted multiple directories containing offensive security tooling and reconnaissance data. Artifacts observed on the system included vulnerability scanning templates, BloodHound data collections, exploit code, and automation scripts commonly used in post-exploitation environments. The tooling resembles what has been observed in modern state-backed cyber campaigns targeting critical infrastructure.

Most notably, the infrastructure included a dedicated Model Context Protocol (MCP) server used to connect the attack environment with multiple large language models. Through this interface, reconnaissance data collected from compromised systems could be processed by AI models capable of generating vulnerability analysis, attack path suggestions, and structured attack plans.

The investigation also found evidence of offensive frameworks designed to allow AI agents to operate penetration testing utilities. Tools commonly used in red-team operations, including credential extraction utilities and network reconnaissance frameworks, were referenced in the infrastructure. Similar AI-driven offensive tooling has also appeared in recent incidents such as the CyberStrikeAI campaign that compromised hundreds of FortiGate devices.

Security researchers note that this approach does not require AI models to discover new vulnerabilities. Instead, the models are used to analyze reconnaissance data and accelerate decision-making during intrusions, potentially allowing attackers to scale operations across multiple compromised networks.

Additional technical details of the investigation are documented in the original research report available at the Cyber and Ramen research publication.

Key MCP Vulnerabilities Security Researchers Are Tracking

MCP is increasingly being adopted in AI automation frameworks. While it enables powerful integrations between language models and operational systems, security researchers warn that poorly secured deployments introduce new attack surfaces.

One of the most common risks involves MCP servers exposed directly to the public internet without strong authentication or authorization controls. Because MCP acts as a bridge between AI agents and connected services, an exposed server may allow attackers to query internal data sources, access file systems, or interact with APIs available to the AI environment.
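A quick way to catch this class of mistake is to check the address a server is configured to listen on before it starts. The sketch below uses Python's standard `ipaddress` module. The function name `exposure_risk` and its three labels are assumptions made for this example, and the check says nothing about firewalls, reverse proxies, or cloud security groups that may sit in front of the listener.

```python
import ipaddress


def exposure_risk(bind_host: str) -> str:
    """Classify a listener address as 'loopback', 'internal', or 'public'."""
    addr = ipaddress.ip_address(bind_host)
    if addr.is_loopback:
        # 127.0.0.0/8 or ::1: reachable only from the same host.
        return "loopback"
    if addr.is_unspecified:
        # 0.0.0.0 or :: listens on every interface, including public ones.
        return "public"
    if addr.is_private:
        # Private or link-local ranges such as 10.0.0.0/8.
        return "internal"
    return "public"
```

A deployment script could refuse to start, or demand an explicit override, whenever this returns `"public"`.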

Researchers have also identified risks related to how MCP is integrated with automation tooling. In some deployments, AI agents are granted permissions to execute scripts, run command-line utilities, or interact with cloud services. If attackers gain access to the MCP interface, they may inherit those privileges and execute commands through the same automation pipeline.

Security researchers are tracking several classes of potential MCP-related vulnerabilities:

- Remote code execution risks in MCP management utilities that allow command execution through tool integrations.

- Path traversal issues in file-access modules that expose local file systems to AI agents.

- Command injection vulnerabilities when MCP tools allow AI models to execute shell commands or scripts.

- Credential exposure when MCP servers store authentication tokens or API keys used by connected services.
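As an illustration of the path traversal class above, a file-access tool can resolve every requested path and refuse anything that lands outside its designated root. This is a minimal sketch: `resolve_inside` and `is_allowed` are hypothetical helper names, and the root directory in the usage note is a placeholder.

```python
from pathlib import Path


def resolve_inside(root: str, requested: str) -> Path:
    """Resolve `requested` relative to `root`; raise if it escapes the root."""
    root_path = Path(root).resolve()
    # resolve() collapses '..' segments and follows symlinks that exist.
    candidate = (root_path / requested).resolve()
    if candidate != root_path and root_path not in candidate.parents:
        raise PermissionError(f"path escapes root: {requested!r}")
    return candidate


def is_allowed(root: str, requested: str) -> bool:
    """Convenience wrapper that returns a boolean instead of raising."""
    try:
        resolve_inside(root, requested)
    except PermissionError:
        return False
    return True
```

With a root of `/srv/mcp-data`, a request for `reports/scan.txt` passes, while `../../etc/passwd` and the absolute path `/etc/passwd` are both rejected. The check runs on the resolved path, so it should be applied immediately before the file is opened rather than at some earlier validation step.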

To reduce these risks, security teams recommend implementing strict access controls around MCP infrastructure and treating MCP servers as privileged automation gateways.

Recommended defensive measures include:

- Restrict MCP servers to internal networks rather than exposing them to the public internet.

- Require strong authentication and token-based authorization for all MCP connections.

- Limit the tools and commands that AI agents are allowed to execute.

- Segment AI automation environments from sensitive production infrastructure.
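Limiting the tools and commands an agent may execute can be as simple as a fixed allowlist combined with argument-list execution, so that no shell ever parses model-generated text. The sketch below is one possible shape under stated assumptions: `ALLOWED_TOOLS`, `run_tool`, and the two sample entries are hypothetical, and a real deployment would list its own binaries.

```python
import subprocess
import sys
from typing import Dict, List

# Hypothetical allowlist: tool name -> fixed argv prefix the agent cannot change.
ALLOWED_TOOLS: Dict[str, List[str]] = {
    "python_version": [sys.executable, "--version"],
    "echo": [sys.executable, "-c", "import sys; print(sys.argv[1])"],
}


def run_tool(name: str, args: List[str]) -> str:
    """Run an allowlisted tool with agent-supplied arguments and return its stdout."""
    if name not in ALLOWED_TOOLS:
        raise PermissionError(f"tool not allowlisted: {name!r}")
    argv = ALLOWED_TOOLS[name] + [str(a) for a in args]
    # shell=False is the default: argv is passed as a list, so characters
    # such as ';' or '|' in an argument are never interpreted by a shell.
    completed = subprocess.run(
        argv, capture_output=True, text=True, timeout=10, check=True
    )
    return completed.stdout.strip()
```

Calling `run_tool("echo", ["hello; rm -rf /"])` returns the string unchanged because the semicolon is plain data, and `run_tool("bash", ["-c", "id"])` raises `PermissionError`. An allowlist of this kind does not validate the arguments themselves, so tools that accept option flags or file paths still need per-tool argument checks.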

Practical Implementation: Securing MCP Infrastructure

During multiple AI infrastructure assessments I have conducted, the most common weakness was treating MCP servers as simple middleware rather than privileged automation gateways. In practice, MCP servers frequently inherit API tokens, infrastructure permissions, and tool execution rights.

Recommended MCP Security Architecture

- Deploy MCP servers inside isolated internal networks.

- Require OAuth or token-based authentication for all agent connections.

- Restrict AI agents to allowlisted tool execution endpoints.

- Implement logging for every MCP tool invocation.

- Segment AI automation environments from production infrastructure.

Organizations implementing AI automation should treat MCP servers similarly to CI/CD orchestration systems because both systems execute commands capable of modifying production infrastructure.
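The authentication and logging items in the architecture above can be combined in a single wrapper around every tool invocation. The following is a rough sketch, not a full OAuth flow: it assumes a single shared bearer token, and `EXPECTED_TOKEN`, `authorized`, `audited_call`, and the `mcp.audit` logger name are all hypothetical.

```python
import hmac
import json
import logging
import time
from typing import Any, Callable, Dict

audit_log = logging.getLogger("mcp.audit")

# Hypothetical placeholder: load the real value from a secret manager.
EXPECTED_TOKEN = "replace-with-secret-from-a-vault"


def authorized(presented_token: str) -> bool:
    """Compare tokens in constant time to avoid leaking timing information."""
    return hmac.compare_digest(presented_token.encode(), EXPECTED_TOKEN.encode())


def audited_call(
    token: str,
    agent_id: str,
    tool_name: str,
    tool: Callable[..., Any],
    arguments: Dict[str, Any],
) -> Any:
    """Authenticate the caller, record the invocation, then run the tool."""
    allowed = authorized(token)
    # One structured record per invocation, written before execution so that
    # denied and failed calls are also visible to incident responders.
    audit_log.info(
        json.dumps(
            {
                "ts": time.time(),
                "agent": agent_id,
                "tool": tool_name,
                "arguments": arguments,
                "allowed": allowed,
            },
            default=str,
        )
    )
    if not allowed:
        raise PermissionError("invalid token")
    return tool(**arguments)
```

Logging the arguments makes reconstruction easier but can also capture secrets passed to tools, so a production version would likely redact sensitive fields before writing the record.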

Common Implementation Mistakes

In real-world deployments I have reviewed, several operational mistakes repeatedly appear when organizations deploy MCP-connected AI agents.

- Overprivileged automation tokens granting AI agents access to cloud infrastructure APIs.

- Exposed MCP servers reachable from the public internet.

- Unrestricted tool execution allowing AI agents to run arbitrary shell commands.

- Lack of command auditing, which prevents incident response teams from reconstructing AI activity.

Each of these failures creates opportunities for attackers to pivot through AI infrastructure rather than compromising the model itself.

Contrarian Perspective: AI Models Are Not the Primary Risk

A common assumption in the security community is that the main threat lies in malicious prompts or prompt injection attacks. In my experience, the more serious risk is operational.

MCP deployments often connect AI agents directly to privileged infrastructure tools. When these connections are misconfigured, attackers do not need to compromise the model itself. They only need to access the MCP interface controlling the automation pipeline.

Frequently Asked Questions

What is the Model Context Protocol?

The Model Context Protocol is an open protocol, introduced by Anthropic, that allows AI models to interact with external tools, APIs, and systems through a standardized interface.

Why are MCP servers considered a security risk?

MCP servers translate AI-generated commands into operational actions. If exposed or misconfigured, they can allow attackers to execute tools, access internal data, or automate reconnaissance.

Can MCP vulnerabilities lead to remote code execution?

Yes. When MCP tools allow command execution or script invocation, attackers may exploit these capabilities to run arbitrary commands on connected systems.

Are AI models themselves vulnerable in MCP attacks?

Often the AI model is not the primary target. Attackers typically focus on the infrastructure layer that executes commands generated by the model.

How should organizations secure MCP deployments?

Organizations should restrict network access to MCP servers, require authentication, implement logging, and limit the tools that AI agents can execute.

Will MCP become common in enterprise AI systems?

Probably. As AI agents become integrated with enterprise automation systems, MCP-style interfaces are likely to become a standard component of AI infrastructure.