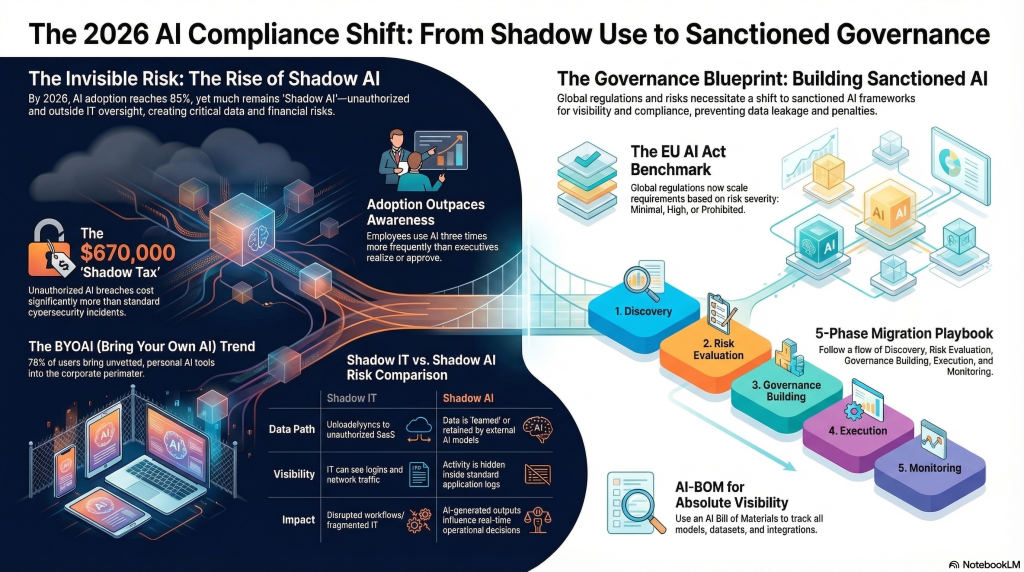

Shadow AI is no longer just a policy headache for CISOs. It is becoming one of the fastest-growing blind spots in enterprise security because employees are adopting AI tools, browser extensions, copilots, and autonomous agents faster than governance teams can track or control them.

IBM defines shadow AI as the unsanctioned use of artificial intelligence tools or applications by employees or end users without formal approval or oversight from IT.

That definition sounds simple, but the real-world problem is much bigger. Shadow AI now spans public chatbots used for work data, unofficial SaaS AI features, personal AI accounts used inside business workflows, and agents that quietly inherit access to email, documents, code repositories, and internal systems.

For defenders, the danger is not AI adoption itself. It is invisible AI adoption. Once security teams lose sight of which tools are being used, what data is being pasted into them, and what permissions those tools receive, shadow AI turns into a combined data exposure, identity, compliance, and governance problem. That makes it less like a fringe productivity trend and more like the next major enterprise control failure waiting to happen.

What Shadow AI Actually Means

At its core, shadow AI is the unsanctioned use of AI inside an organization, without formal approval or oversight from IT. That includes obvious cases such as staff pasting company data into public chatbots, but it also includes less visible scenarios that are more dangerous in practice.

Today, shadow AI can appear as a browser extension summarizing internal pages, an AI note-taker connected to meeting platforms, a personal account used to analyze customer spreadsheets, a SaaS feature enabled without review, or an autonomous agent tied into internal data sources. In other words, shadow AI is no longer only about a chatbot tab open in a browser. It is about ungoverned AI capability operating inside business workflows.

This is where the old comparison to shadow IT starts to break down. Shadow IT usually describes unauthorized apps or services adopted without approval. Shadow AI shares that DNA, but it also introduces model behavior, prompt leakage, agent autonomy, and access inheritance. Once an AI tool is allowed to read mailboxes, documents, code, tickets, or chat histories, the organization is not just dealing with unauthorized software. It is dealing with a system that can process, summarize, transform, and redistribute sensitive information at machine speed.

Why Shadow AI Is a Security Problem, Not Just a Policy Problem

The first and most obvious risk is data exposure. Employees often treat AI tools like harmless productivity apps, but prompts can contain customer records, internal strategy, legal drafts, source code, credentials, financial data, and incident details. Once that information is entered into an unsanctioned AI service, the organization may lose visibility over how it is stored, retained, processed, or reused.
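To make the data exposure risk concrete, here is a minimal sketch of the kind of check a proxy or browser control could run on prompt text before it leaves the organization. The pattern names, the regular expressions, and the `classify_prompt` helper are illustrative assumptions, not a complete data loss prevention ruleset.

```python
import re

# Illustrative patterns only; a real deployment would need a far broader,
# tested ruleset tuned to the organization's own data classes.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "email_address": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def classify_prompt(text):
    """Return the sorted names of sensitive patterns found in a prompt."""
    return sorted(name for name, pattern in PATTERNS.items() if pattern.search(text))


if __name__ == "__main__":
    prompt = "Why does AKIAABCDEFGHIJKLMNOP fail for bob@example.com?"
    print(classify_prompt(prompt))
```

Pattern matching like this only catches well-structured secrets. Strategy documents, legal drafts, and incident details need classification approaches that go beyond regular expressions.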

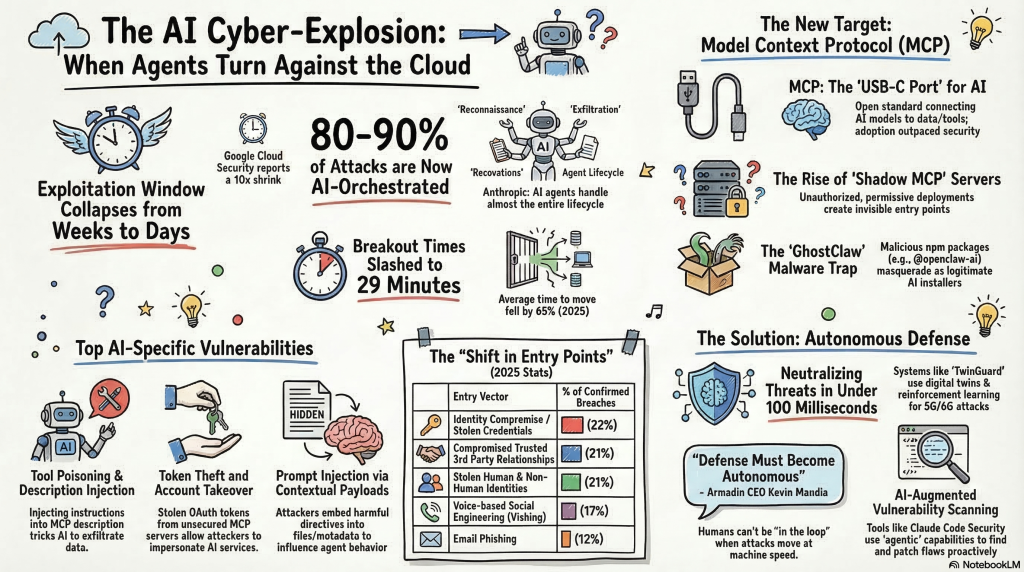

The second risk is identity and access sprawl. Shadow AI increasingly includes tools and agents that connect to enterprise platforms. A seemingly helpful assistant may be granted access to email, SharePoint, Git repositories, CRM systems, chat histories, or internal dashboards. That means the issue is no longer just what data a user pastes into a prompt. It is also what data an AI system can continuously pull, summarize, and act on once it is connected.

The third risk is compliance failure. If teams adopt AI tools outside approved controls, legal and security teams may not be able to demonstrate where regulated data went, whether retention requirements were followed, or whether vendor and residency obligations were met. Even without a headline-making breach, shadow AI can create an audit and governance nightmare.

There is also an integrity problem. Employees may trust AI output too quickly, use hallucinated information in reports or security decisions, or rely on untested agents inside sensitive workflows. That creates a subtler but still important risk: bad decisions made faster, with less review, under the illusion that the system is intelligent enough to be trusted.

How Security Teams Should Respond

The worst response to shadow AI is a blanket ban with no viable alternative. Employees adopt unsanctioned tools because they solve real workflow problems. If the organization offers no approved path, usage usually goes underground, which makes detection harder and governance weaker.

A better approach starts with visibility. Security teams need to identify which AI tools are being accessed, which browser extensions are present, which SaaS features are enabled, and which applications or agents have been granted OAuth-style access to enterprise data. That means treating shadow AI as a discovery problem first and an enforcement problem second.
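As a rough illustration of the discovery step, the sketch below reviews a list of third-party app grants and surfaces those that are not on an approved list, ranked by how many broad scopes they hold. The `OAuthGrant` record, the `APPROVED_APPS` allowlist, and the scope names in `BROAD_SCOPES` are assumptions for the example. In practice the grant data would come from an identity provider or SaaS admin export, whose format will differ.

```python
from dataclasses import dataclass

# Hypothetical allowlist and scope names; replace with the organization's own.
APPROVED_APPS = {"Approved Copilot"}
BROAD_SCOPES = {"Mail.Read", "Files.Read.All", "repo"}


@dataclass(frozen=True)
class OAuthGrant:
    app_name: str
    user: str
    scopes: frozenset


def find_unreviewed_grants(grants):
    """Return (grant, broad_scopes) pairs for apps not on the allowlist."""
    findings = []
    for grant in grants:
        if grant.app_name in APPROVED_APPS:
            continue
        broad = sorted(grant.scopes & BROAD_SCOPES)
        findings.append((grant, broad))
    # Put the grants holding the most broad scopes at the top of the review queue.
    findings.sort(key=lambda finding: len(finding[1]), reverse=True)
    return findings


if __name__ == "__main__":
    sample = [
        OAuthGrant("Approved Copilot", "a@example.com", frozenset({"Mail.Read"})),
        OAuthGrant("NoteTakerX", "b@example.com", frozenset({"Mail.Read", "Files.Read.All"})),
        OAuthGrant("SummarizerY", "c@example.com", frozenset({"profile"})),
    ]
    for grant, broad in find_unreviewed_grants(sample):
        print(grant.app_name, grant.user, broad)
```

The output is a review queue rather than a block list, which matches the idea of treating discovery as the first step and enforcement as the second.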

Next comes governance. Organizations should define approved AI services, prohibited data classes, access review requirements, and rules for when AI systems can connect to internal mailboxes, file shares, code repositories, collaboration platforms, or customer systems. The goal is not to stop all AI use. It is to make AI use visible, governed, and defensible.
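One way to keep such rules reviewable is to express them as data that can be evaluated automatically. The following is a minimal sketch under that assumption. The `AIUsagePolicy` class, its field names, and the example service and data class labels are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AIUsagePolicy:
    approved_services: set = field(default_factory=set)
    prohibited_data_classes: set = field(default_factory=set)
    connectors_requiring_review: set = field(default_factory=set)

    def evaluate(self, service, data_classes, connectors):
        """Return a list of policy violations; an empty list means allowed."""
        violations = []
        if service not in self.approved_services:
            violations.append(f"service not approved: {service}")
        for data_class in sorted(set(data_classes) & self.prohibited_data_classes):
            violations.append(f"prohibited data class: {data_class}")
        for connector in sorted(set(connectors) & self.connectors_requiring_review):
            violations.append(f"connector needs access review: {connector}")
        return violations


if __name__ == "__main__":
    policy = AIUsagePolicy(
        approved_services={"internal-assistant"},
        prohibited_data_classes={"credentials", "customer_pii"},
        connectors_requiring_review={"mailbox", "code_repository"},
    )
    print(policy.evaluate("public-chatbot", ["customer_pii"], ["mailbox"]))
```

Returning a list of reasons instead of a bare allow or deny keeps each decision explainable, which supports the goal of AI use that is visible, governed, and defensible.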

Least privilege matters here more than ever. An AI assistant or agent should not receive broad, standing access simply because it makes work more convenient. As Cyberwarzone has already highlighted in its coverage of local AI agents being hijacked and MCP-related AI security risks, the AI ecosystem expands attack surfaces quickly when trust outruns control.
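A least-privilege review can be reduced to two simple questions: does the assistant hold scopes it does not need, and has its access gone unreviewed for too long? The sketch below illustrates both checks. The function names, the scope strings, and the 90-day `MAX_GRANT_AGE` interval are assumptions chosen for the example, not a recommended standard.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical review interval; pick one that fits the organization's risk appetite.
MAX_GRANT_AGE = timedelta(days=90)


def excess_scopes(granted, required):
    """Return scopes the tool holds beyond what its task requires."""
    return sorted(set(granted) - set(required))


def needs_review(granted_at, now=None):
    """Return True when a standing grant is older than the review interval."""
    now = now or datetime.now(timezone.utc)
    return now - granted_at > MAX_GRANT_AGE


if __name__ == "__main__":
    granted = ["mail.read", "files.read.all", "calendar.read"]
    required = ["calendar.read"]
    print(excess_scopes(granted, required))
    print(needs_review(datetime(2024, 1, 1, tzinfo=timezone.utc)))
```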

The Real Question Is Not Whether Employees Use AI

Most enterprises are already past that point. Employees are using AI now, often daily, and often outside formal approval channels. The real security question is whether the organization can make that use visible before it turns into a data leak, an access-control problem, or a compliance failure.

Shadow AI is dangerous because it grows quietly. A prompt here, a browser extension there, a copied spreadsheet, a connected mailbox, an agent with too much access. None of these actions may look severe on its own. Together, they create an enterprise attack surface that traditional security programs were not designed to see clearly.

That is why the organizations that handle shadow AI best will not be the ones that simply ban it the loudest. They will be the ones that discover it early, govern it intelligently, limit its permissions, and give employees a secure path to use AI without creating a hidden security debt the business will pay for later.